“So let us begin anew–remembering on both sides that civility is not a sign of weakness, and sincerity is always subject to proof.”

John F. Kennedy’s inauguration speech in January 1961.

This could be a blog post with a very short shelf life. As we wait for results to start coming in from today’s general election in the US, I’m reminded of Kennedy’s inaugural speech in 1961. Kennedy was speaking about Cold War divisions here, but I think his words have peculiar resonance in relation to the opportunity for change, healing divisions, rounding a corner, beginning anew in the current domestic situation.

I think most of us would agree that the most significant casualty over the past four years has been political trust. The decline of trust did not start in November 2016. From the early 1990s, Newt Gingrich developed a destructive partisanship that almost inevitably led to Trumpworld. He created conspiracy theories, engaged in strategic obstructionism, and sought to use the so-called ‘culture wars’ to destroy bipartisanship and create dysfunction in Washington. From the start of his career as a Republican activist in the late 1970s, Gingrich called for Republicans to act “nasty”, in what he called a “war for power”. This was the so-called Republican Revolution: to destroy trust in the system and divide the electorate along what Karl Rove, Bush’s key strategist, would later call wedge issues – mostly what we might call progressive social change like abortion, marriage equality, and broad civil rights agendas.

It is perhaps no surprise, then, that Gingrich has been one of Trump’s big supporters. Trump’s nasty, name-calling, personal-attack strategy is straight from Gingrich’s playbook. Rather than draining the swamp, his golfing, Fox-watching, late-night tweeting, lying and obstructionism have done exactly what Gingrich wanted: made people sick of politics, mistrust Washington, buy into completely ludicrous conspiracy theories, opt out completely.

It was Gingrich who turned legislating into a reality show. With C-SPAN cameras installed in the House, Gingrich became a performer in his own political reality show. He repurposed the term ‘communist’ as an insult. The Trump supporters who accuse Harris and Biden of being socialist have, for the most part, no idea what that term actually means; they get their insult handed down from Gingrich, through Rove, through Palin, through Trump. Gingrich even wrote a memo about language use: in the late 1980s, his “Language: a Key Mechanism of Control” encouraged Republicans to call Democrats “radical”, “traitors”, “corrupt”, “socialist”. He weaponized impeachment – again an opportunity for reality TV – and he weaponized Supreme Court nominations in new and nefarious ways.

If Trump wins in 2020, it will be another triumph for Gingrich, and Gingrich’s successors like Mitch McConnell. We’ll spend four more years going around and around in a spiral of lies, obfuscation, maladministration and distraction, while the foundations of democracy are hacked away, live on TV. I am usually not that melodramatic. But Trump didn’t start this – he is a symptom of it. I’m not sure if a return to civility and sincerity is entirely possible after four years of Trump, but it certainly won’t be after eight.

The high turnout figures being reported this week give some pause here. Without indulging too much in counterfactuals, or “what if” history, I do wonder whether this high level of engagement would have been the case if we weren’t dealing with a pandemic. I’m sure the political scientists will be analysing the ways in which the coronavirus influenced political engagement over the past months. And they’ll be able to gauge how much voters register the origins of their political engagement through racial polarization, reactions to police brutality, and the Black Lives Matter movement. Democrats, and those who lean Democrat, do not trust the government. Melania voted maskless. Do you trust the masked people or the unmasked? Do you trust Fox News or MSNBC? A good friend of mine told me yesterday that in relatively affluent parts of Philadelphia, the drugstores and other shopfronts have been boarded up for the past couple of weeks. There is no trust that civility will return, no matter who wins.

All the norms appear to be gone. Disinformation has eroded all trust.

The New York Times editorial today headlines: “You’re not just voting for President. You’re voting to start over.” “The American experiment has taken a beating, but there’s a chance to renew our democracy”, the editorial tells us. But Republican activists in Texas are still – even now, even after several court cases – trying to throw out 120K votes. Voter suppression is real. It’s not clear that democracy can be renewed.

What do Biden and Harris represent? Contrary to the Republican hype, they are about as middle ground as you can get. I like Kamala Harris a lot, but you might suspect that if she were a politician in Britain, she could easily have been one of David Cameron’s so-called compassionate conservatives. It’s telling that one of Biden’s last pre-election TV ads was voiced by Bruce Springsteen – a symbol of good old-fashioned, solid, Born in the USA American values. Whereas Biden came into the vice presidency in 2008 on the Obama ticket of Hope and Change, he’s now essentially running on a ticket of God, Can We Rewind the Clock to Civility and Sincerity? Less catchy.

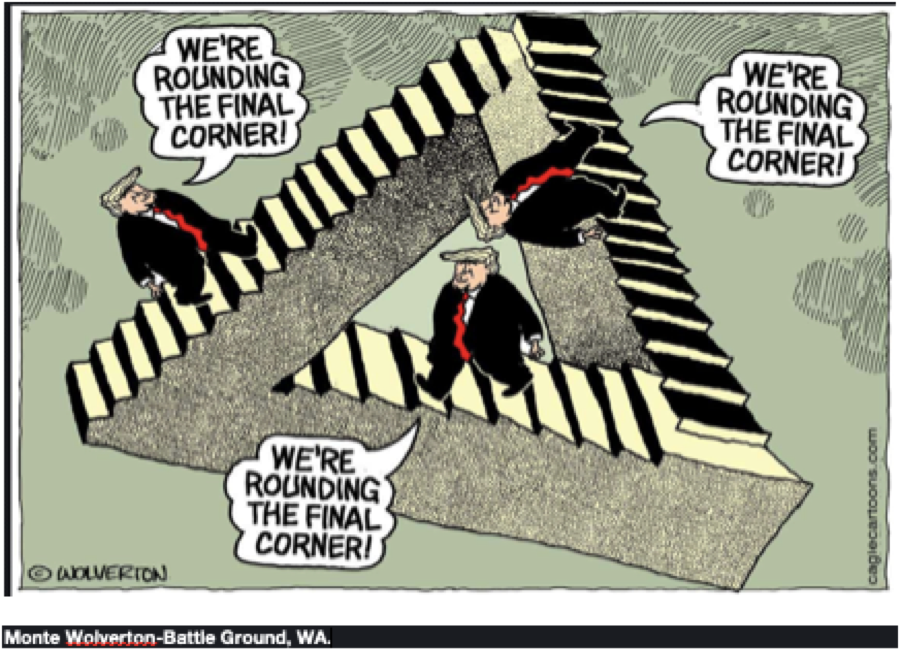

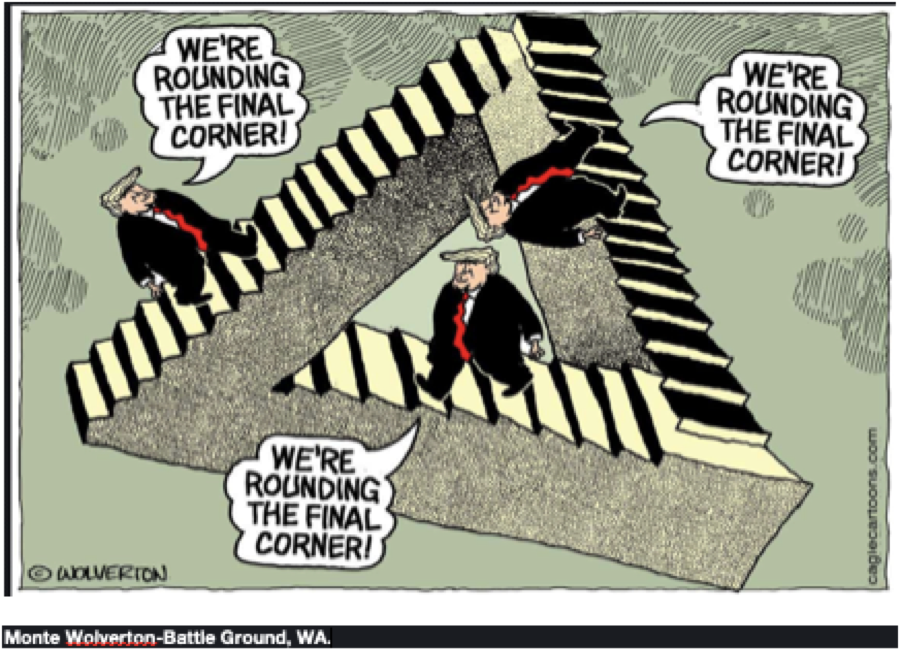

When I was a child, and I would go for a walk with my grandmother, I would start to whine when my feet got sore and I didn’t want to walk any more. She would always tell me the same thing: just a little further. It’s just down the road, and around the corner. Oddly, this is what this Trump cartoon reminds me of. We’re just rounding the corner. Except, like Trump, my grandmother was always lying. Will the US round the corner this week?